3R in preclinical research

3. First steps taken towards data networking

The industry is making a pioneering contribution towards reducing animal experiments

In a highly regarded project, four pharmaceutical companies, supported by the European Federation of Pharmaceutical Industries and Associations (EFPIA), have joined forces with the European Chemicals Agency (ECHA) to launch a voluntary, non-profit initiative through which industry shares its data. The aim is to make high-quality, previously unpublished physical-chemical, toxicological and ecotoxicological substance data from the companies' archives publicly available. Broader access to chemical safety data improves the performance of database tools that predict the properties of chemical substances. Experts from academia and research-based pharmaceutical companies can also use this data to build models that gradually reduce, or eventually eliminate, animal experiments for chemical substances. ECHA has agreed to support the initiative, act as a broker and provide a neutral platform for disseminating the data. A test phase is currently underway with the participation of ECHA, EFPIA, Boehringer Ingelheim, F. Hoffmann-La Roche, Johnson & Johnson and Merck KGaA. The longer-term goal is a programme that additional companies can join to make their own archive data available.

Advancing the 3Rs with data platforms

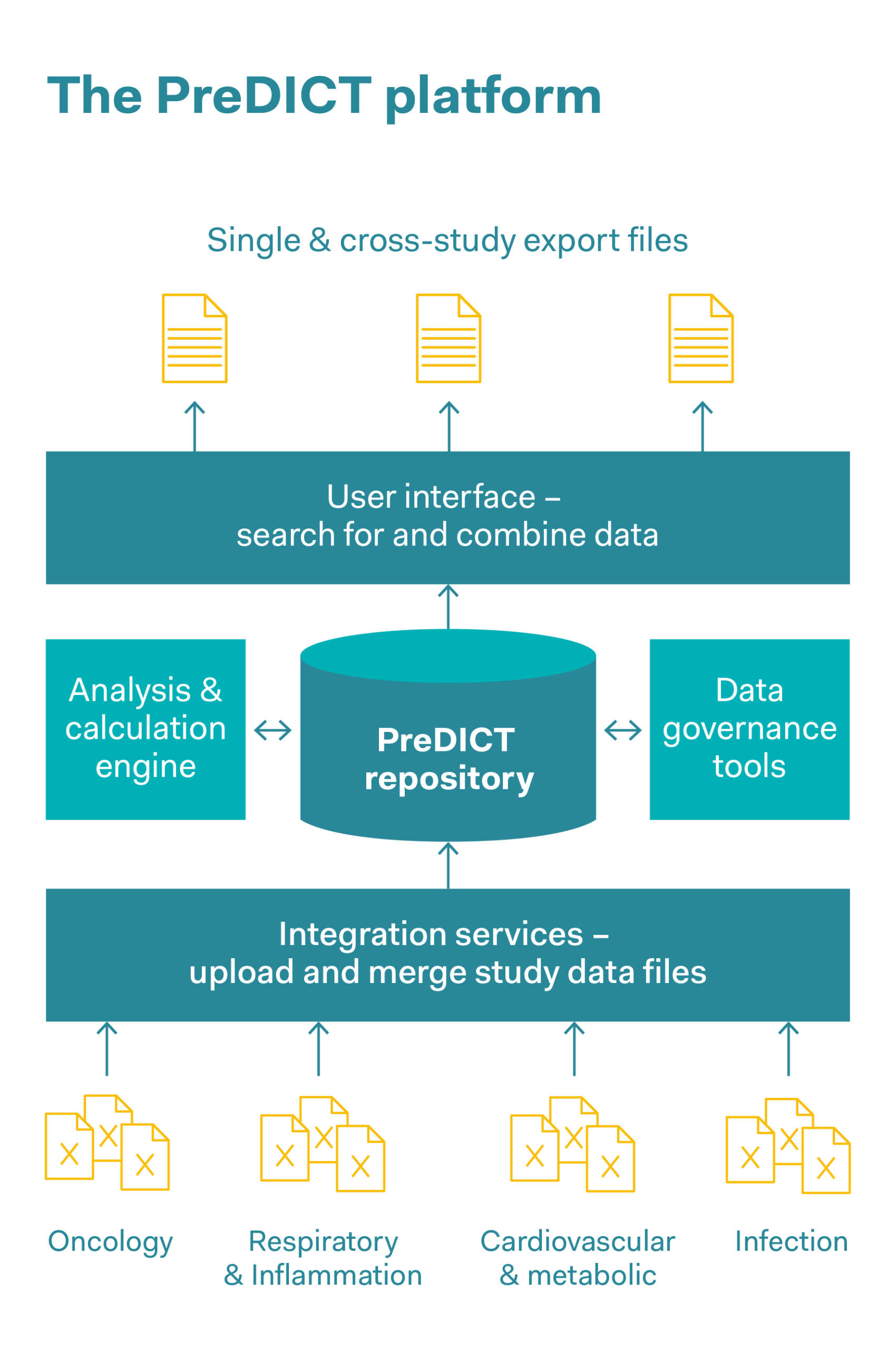

In pharmaceutical companies, complex datasets are still often captured, stored and shared in the form of spreadsheets. AstraZeneca's PreDICT (Preclinical Data Integration and Capture Tool) platform, developed in collaboration with the data analysis firm Tessella, offers an alternative. The first step was to define a set of data standards that allow in vivo study data from all research areas to be represented clearly and comprehensively. The preclinical data captured and analysed includes pharmacokinetic data (describing what happens to a medicinal substance in the body: its absorption, its distribution within the body, and its metabolism and elimination), pharmacodynamic data (describing the substance's effects on the body, both desired and adverse, and how these depend on the dose) and efficacy data. The system ensures data integrity and enables scientists to rapidly find, integrate and share in vivo datasets in order to predict optimal dosages and dosing schedules for clinical trials. Besides saving time, reducing outsourcing costs and simplifying workflows, the platform led to a significant improvement in data quality and greater confidence in the data. This rapid access to high-quality data makes it considerably easier for researchers to model how drugs behave in living organisms. The predictions from these models are intended to replace some in vivo experiments entirely and, where animal studies remain necessary, to maximise the information obtained from each animal and reduce the number of animals used.

Reduce

Access to large amounts of data helps researchers design experiments that yield as much information as possible from each animal used. Archived data also helps avoid repeating in vivo experiments that have already been carried out and reduces the number of animals needed in new studies, for example by reusing control-group data from earlier comparable studies.

Replace

Rapid access to high-quality data makes it easier for researchers to model the behaviour of active substances in living organisms. The better the in vivo data is maintained, the more likely it is that models will produce reliable predictions capable of replacing in vivo experiments.

Refine

The database makes it possible to combine datasets from many studies into a meta-analysis and provides a comprehensive overview of how animals are used. This overview helps researchers refine their in vivo experiments, that is, adapt procedures so as to minimise pain and distress to the animals.

Using big data to improve research and patient safety

Supported by several pharmaceutical companies and academic partners, the technology company GMV has created a biomedical data platform as part of the eTRANSAFE project. The platform is intended to improve the development of new drugs and make them safer for patients. The overarching goal of eTRANSAFE was to substantially improve the predictability, feasibility and reliability of safety assessments during drug development. This was achieved by developing the eTRANSAFE ToxHub platform, which brings preclinical and clinical databases together in an integrated data infrastructure and combines them with innovative computational and visualisation tools. Pooling these sources yields a body of biomedical data large enough to draw conclusions using big-data technologies. The benefits of the project include more efficient studies, shorter research times and more reliable toxicity assessments. One part of the project compares preclinical and clinical studies in order to better predict how a drug will behave in humans during the clinical phase.

eTRANSAFE – an Innovative Medicines Initiative project

eTRANSAFE was developed as part of the «Innovative Medicines Initiative (IMI)», Europe's largest public-private initiative, which is supported by the European Union's Horizon 2020 research and innovation programme and the European Federation of Pharmaceutical Industries and Associations (EFPIA). The project aimed to make a large quantity of preclinical and clinical data available while adhering to data governance procedures. One prerequisite was the availability of relevant, high-quality datasets. To use this data optimally, however, major challenges had to be overcome, such as encouraging competing organisations to exchange information and ensuring appropriate quality control, standardisation and annotation of the data.